Researchers at the Department of Physics have today published a paper reviewing the use of artificial intelligence and machine learning for understanding "extreme physics" – the physics of matter at extremely high temperatures and densities. This area of physics is crucial for our understanding of astrophysics, nuclear fusion, and many areas of fundamental physics. The paper, 'The data-driven future of high-energy-density physics', is published in Nature; it describes the successes to date of artificial intelligence in this field and looks ahead to what might be possible in the future.

"Extreme physics" conditions typically produce highly nonlinear plasmas, in which several phenomena that can normally be treated independently of one another become strongly coupled. These nonlinearities and strong couplings makes them very difficult to understand theoretically or to optimise experimentally – a fundamental challenge in the field.

Reshaping our exploration

In their paper, the researchers argue that machine learning models and data-driven methods are in the process of reshaping our exploration of these extreme systems. From a fundamental perspective, machine learning models can rapidly discover complex interactions in large datasets, which improves our understanding. From a practical point of view, the newest generation of extreme physics facilities can perform experiments multiple times a second, rather than roughly once a day. This is moving the field away from human-based control and towards automatic control based on real-time interpretation of diagnostic data and updates of the physics model.

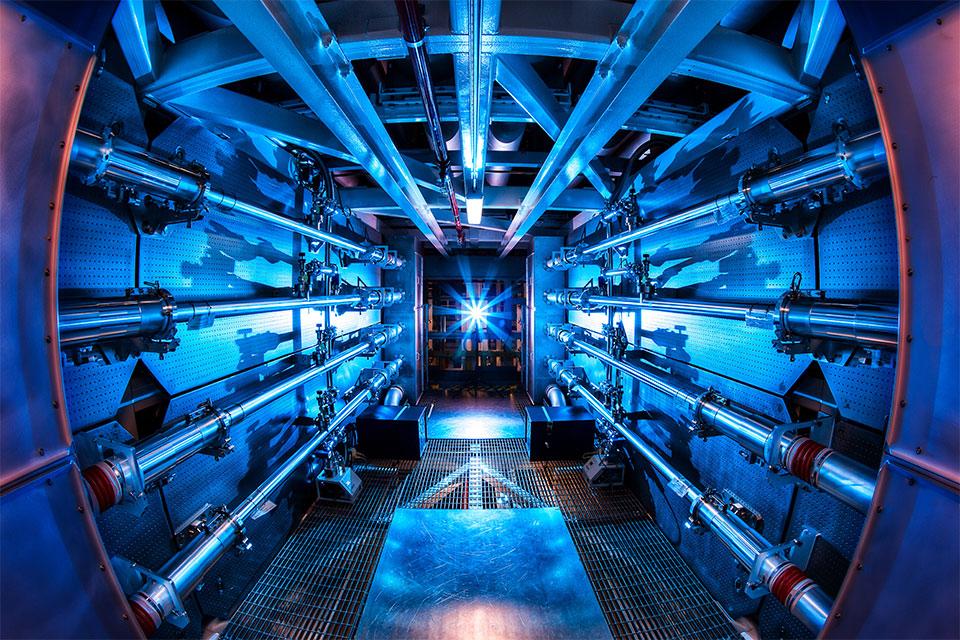

The paper discusses three main case studies. The first combines data from laboratory astrophysics (high-powered laser experiments that can create, on Earth, conditions similar to those inside stars) with data from astronomical observations in a consistent statistical model, e.g. Nagayama et al (2019). The second is inertial confinement fusion: training algorithms to encode our current understanding of the physics, while remaining able to "update" their beliefs when they see data from new experiments and to suggest new designs, e.g. Peterson et al (2017). The third is the application of AI to high repetition rate lasers, where an algorithm is in control of the laser and chooses which experiment to perform in order to extract the most information from the experimental setup, e.g. Shalloo et al (2020).

Recommendations for high data rate high-energy-density physics

The group makes a number of recommendations to help the field make the best use of the vast amounts of data that are soon to be produced:

- Researchers should think carefully about how best to use their data: what methods and diagnostics they can use to take the best data, get sensible uncertainties, and coherently combine with other datasets

- Awards of experimental time and instrument construction should also include greater support for uncertainty quantification, building synthetic diagnostics and data analysis

- Plasma physics graduate education and national laboratory training programmes should begin to include basic data science courses

- Researchers should, where possible, follow open science best practice: making code and data publicly available, and using shared data standards across different facilities

The paper was led by Dr Peter Hatfield, Hintze Fellow in the Department, in collaboration with Dr Jim Gaffney and Dr Gemma Anderson at Lawrence Livermore National Laboratory in California. Oxford academics Dr Charlotte Palmer (now at Belfast) and Professor Steven Rose were also co-authors of the perspective, and leading research from several other academics in the department, including Dr Muhammad Kasim, was highlighted in the review.

The paper resulted from a meeting, Extreme Physics, Extreme Data, held at the Lorentz Centre, University of Leiden, on 13-17 January 2020, and was supported by the Dutch Research Council (NWO), the University of Leiden and the John Fell Oxford University Press (OUP) Research Fund.